Experience-based control does not scale in turbulent systems

Replenishment planning is one of the core management functions of any supply chain. For decades, it operated with remarkable reliability in many companies: experienced planners combined ERP recommendations with market knowledge, historical data and personal judgement. In relatively stable markets, this interplay of system logic and experience usually led to pragmatic and effective decisions.

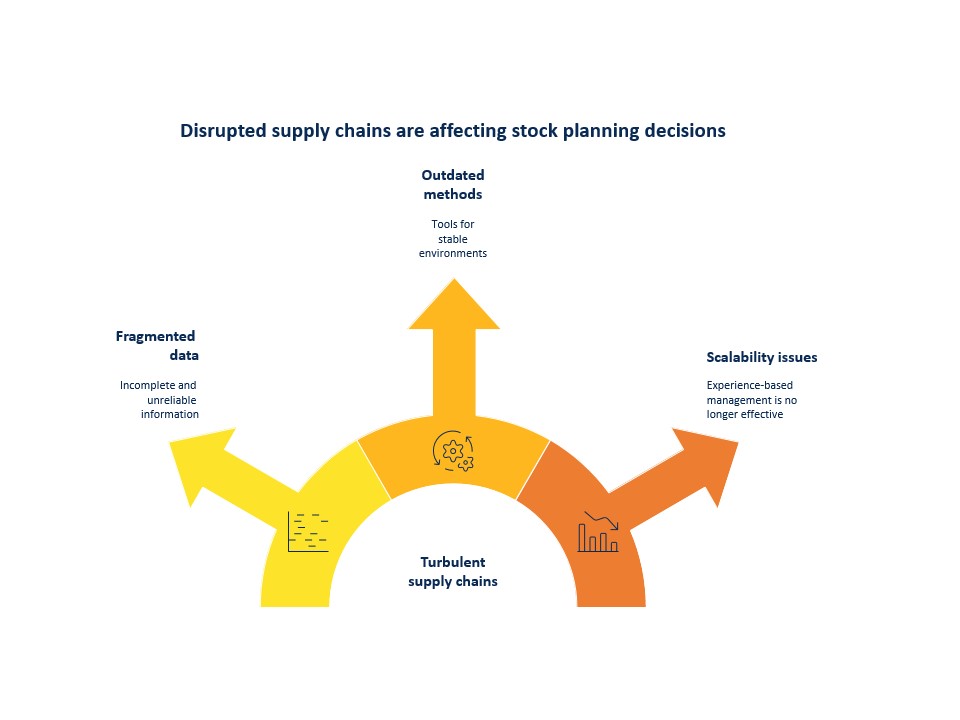

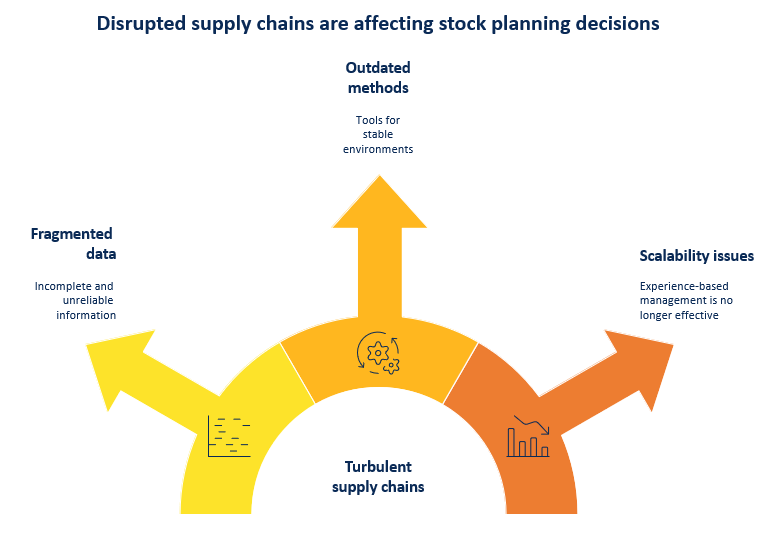

However, the operating environment has changed fundamentally. Supply chains have become more volatile, product variety and SKU numbers are rising, and external disruptions – from geopolitical conflicts to supplier failures – are occurring more frequently and are less predictable. Yet many planning processes continue to rely on tools and methods developed for a significantly more stable environment.

The result: replenishment decisions are often based on fragmented data, safety stock levels built up over time, poorly maintained system parameters and human decision-making heuristics. What worked in stable systems is increasingly reaching its limits under turbulent conditions. Experience-based control no longer scales in such environments.

From stable supply chains to turbulent systems

Traditional planning emerged at a time when conditions were relatively stable. In that environment, practical experience could compensate for many of the shortcomings of data and systems.

However, as complexity grows, the nature of the task changes fundamentally. Today, what matters is not so much the experience of individual planners as the ability to consistently map complex interrelationships.

Four structural patterns illustrate why many planning processes increasingly struggle to cope with this development.

1. Excel’s parallel worlds: when the data is fragmented

In many companies, Excel remains the most important operational tool for production planning. Even where powerful ERP systems are in use, demand forecasts, stock overviews and scenario analyses are often handled in spreadsheets. Production, purchasing, sales and planning each work with their own files, which are regularly exchanged via email or shared drives. The result is a set of parallel data silos.

This way of working has several structural weaknesses. Firstly, there is no shared, consistent database. As soon as a spreadsheet is sent, it is essentially already out of date. Changes made in one department often only become visible to other departments after a delay. Decisions are therefore often based on differing levels of information.

Secondly, spreadsheets are prone to errors. Formulas are adjusted, values are manually overwritten, or data is transferred incompletely. In complex planning spreadsheets, even a minor error is enough to distort stock trends or demand forecasts. In planning, such errors can have immediate operational consequences – such as incorrect order quantities, delayed procurement decisions or unnecessary stock build-ups.

Thirdly, Excel-based planning processes make collaboration difficult. When several people work on different versions of a file at the same time, inconsistencies and the need for coordination can easily arise. A significant proportion of planners’ working hours is then spent not on evaluating planning decisions, but on collecting, checking and consolidating data.

In relatively stable environments, this approach could be managed pragmatically for a long time. However, as supply chains become increasingly dynamic, the fragmentation of the underlying data is becoming a growing problem. The faster demand, lead times or stock levels change, the greater the impact that delayed or inconsistent information has on the quality of planning decisions.

This highlights a fundamental tension: whilst modern supply chains are becoming increasingly interconnected and dynamic, many planning processes continue to rely on tools that were originally developed for individual analyses rather than for the integrated management of complex systems.

2. Historically accumulated safety stocks

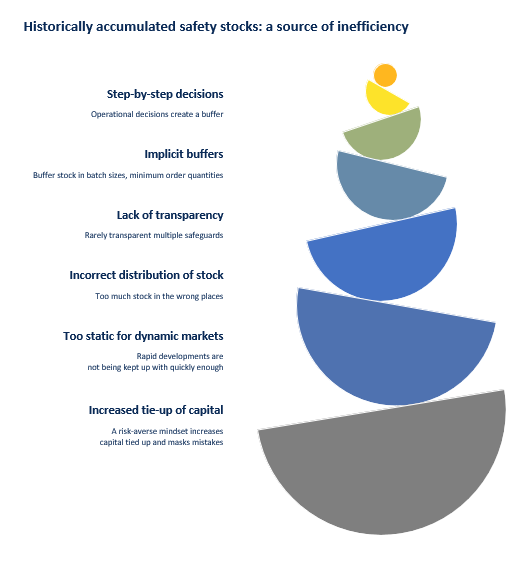

A second structural pattern found in many planning systems is safety stock that was never systematically planned but has built up over the years. In practice, it often arises gradually as a result of operational decisions: the sales department increases minimum stock levels for key items, the planning department responds to delivery uncertainties by adding extra buffers, and the procurement department extends lead times or batch sizes as a precaution.

Each of these measures appears plausible in its own right. Taken together, however, they create multiple, often invisible layers of safety stock throughout the entire supply chain. Explicit safety stock is recorded in the ERP system, whilst additional implicit buffers arise in batch sizes, minimum order quantities or brought-forward order dates. These layers of protection add up, yet they are rarely visible as a whole.
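How such layers add up can be illustrated with a small calculation. The following Python sketch is only an illustration under simplifying assumptions: the `ItemSettings` fields, the `buffer_layers` function and all numbers are hypothetical, and an order placed early is treated as extra stock equal to average demand over the days brought forward.

```python
import math
from dataclasses import dataclass


@dataclass
class ItemSettings:
    """Hypothetical planning settings for one item (illustrative only)."""
    daily_demand: float        # average units consumed per day
    safety_stock: float        # explicit safety stock maintained in the ERP
    net_requirement: float     # units actually needed in the next order
    min_order_qty: float       # supplier minimum order quantity
    lot_size: float            # orders are rounded up to multiples of this
    days_ordered_early: float  # order date brought forward as a precaution


def buffer_layers(s: ItemSettings) -> dict[str, float]:
    """Break the total protective buffer down into its individual layers."""
    # Raise the requirement to the minimum order quantity,
    # then round up to a full multiple of the lot size.
    order_qty = max(s.net_requirement, s.min_order_qty)
    order_qty = math.ceil(order_qty / s.lot_size) * s.lot_size

    layers = {
        "explicit_safety_stock": s.safety_stock,
        "rounding_surplus": order_qty - s.net_requirement,
        "early_order_cover": s.days_ordered_early * s.daily_demand,
    }
    layers["total_buffer"] = sum(layers.values())
    layers["days_of_cover"] = layers["total_buffer"] / s.daily_demand
    return layers


example = ItemSettings(
    daily_demand=20, safety_stock=100, net_requirement=130,
    min_order_qty=200, lot_size=50, days_ordered_early=5,
)
layers = buffer_layers(example)
for name, value in layers.items():
    print(f"{name}: {value}")
```

In this made-up case, the safety stock visible in the ERP corresponds to 5 days of demand, while all layers together cover 13.5 days. Only the first layer would show up in a report on safety stock.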

Many companies only realise, upon systematic analysis, that a significant portion of their stock has accumulated historically and was never actively planned. Often, there is too much stock of the wrong items in the wrong places along the supply chain.

In stable markets, such safety reserves have long served as a pragmatic safeguard against uncertainty. In more dynamic supply chains, however, they are increasingly giving rise to new problems. High stock levels tie up capital, mask planning errors and delay the response to changes in demand.

This creates a paradoxical effect: safety stocks are intended to cushion uncertainty – but in complex systems they can themselves become a source of additional inefficiency.

3. Planning rules that nobody maintains anymore

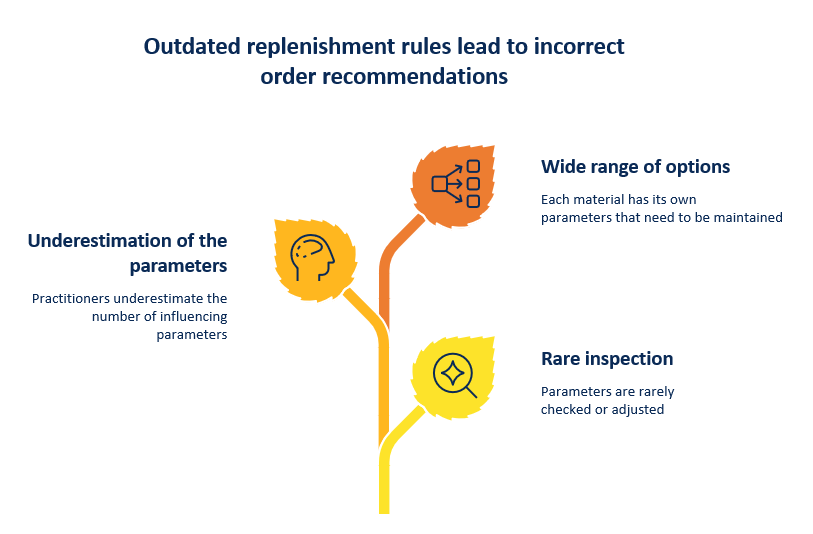

A third problem lies less in the tools themselves than in the maintenance of the underlying planning rules. Modern ERP systems operate using a wide range of parameters – such as lead times, batch sizes, forecasting methods and safety stock levels. These parameters play a decisive role in determining which order proposals the system generates.

In practice, however, many of these settings are rarely reviewed or adjusted. They often date back to the ERP system’s implementation phase or to earlier market conditions and then remain unchanged for years. Meanwhile, demand patterns, lead times and product ranges keep changing. This gradually creates a growing gap between the assumptions in the system and reality.

Added to this is a second, less obvious point: many practitioners underestimate the actual number of parameters that influence the planning logic of an ERP system. In many planning departments, there is a perception that essentially only a few control variables – such as safety stock, lead time, batch size or forecasting methods – need to be maintained regularly.

In reality, however, modern ERP systems have a significantly larger number of possible control parameters. Depending on the system, several dozen, and in some cases well over a hundred settings, can influence the planning logic – ranging from forecasting methods and service level parameters to rounding rules, planning time windows or planning strategies. Many of these parameters were set to standard or default values once during the system’s implementation and remain unchanged thereafter, even though they do not necessarily fit the respective material structure or demand behaviour.

The range of parameters that actually need to be checked to ensure accurate planning logic is therefore considerably wider than many users assume in practice.

As the range of variants grows, this problem becomes even more acute. Every material has its own MRP parameters, which, in principle, should be reviewed regularly. As the number of items increases, so does the number of parameters that need to be maintained – often without any additional resources being made available for this purpose.

The result is a creeping divergence between system logic and reality. Planning systems operate on the basis of assumptions regarding demand trends, lead times or batch sizes that are no longer valid. Order proposals are then calculated correctly in formal terms, but are based on outdated premises.
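One way to make this divergence visible is to compare a maintained parameter with what is actually observed. The sketch below does this for lead times only. It is an assumption-laden illustration rather than a feature of any particular ERP system: the function `flag_stale_lead_times`, the 20% tolerance and the sample data are all hypothetical.

```python
from statistics import median


def flag_stale_lead_times(planned, observed, tolerance=0.2):
    """Return materials whose planned lead time deviates from the
    median observed lead time by more than `tolerance` (relative).

    planned:  {material: planned lead time in days (must be > 0)}
    observed: {material: list of actual lead times in days}
    """
    flagged = {}
    for material, planned_days in planned.items():
        history = observed.get(material, [])
        if not history:
            continue  # no receipts recorded, so nothing to compare
        actual = median(history)
        deviation = (actual - planned_days) / planned_days
        if abs(deviation) > tolerance:
            flagged[material] = round(deviation, 2)
    return flagged


# Hypothetical master data and goods-receipt history.
planned = {"A-100": 10, "B-200": 14, "C-300": 7}
observed = {
    "A-100": [9, 10, 11, 10],
    "B-200": [20, 22, 19, 21],
    "C-300": [],
}

stale = flag_stale_lead_times(planned, observed)
print(stale)  # {'B-200': 0.46}
```

Here the system still plans material B-200 with 14 days, although deliveries have recently taken about 20 days. Every order proposal for this item is formally correct and still arrives too late.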

This highlights a fundamental scaling problem: the central issue is not the algorithm itself, but the manual management of its parameters. The more complex product ranges and supply chains become, the more difficult it is to keep these rules consistent and up to date.

4. Gut feeling as a guiding principle

In addition to fragmented data, historically accumulated safety stock and poorly maintained planning rules, another factor plays a major role in many companies: human judgement. Planners regularly intervene in system recommendations, adjust forecasts or alter order quantities based on their experience.

In principle, this intervention is understandable. In many situations, practitioners possess contextual knowledge that is not reflected in the system – such as information about upcoming market campaigns, supplier issues or short-term fluctuations in demand. Experience can therefore be a valuable component of planning.

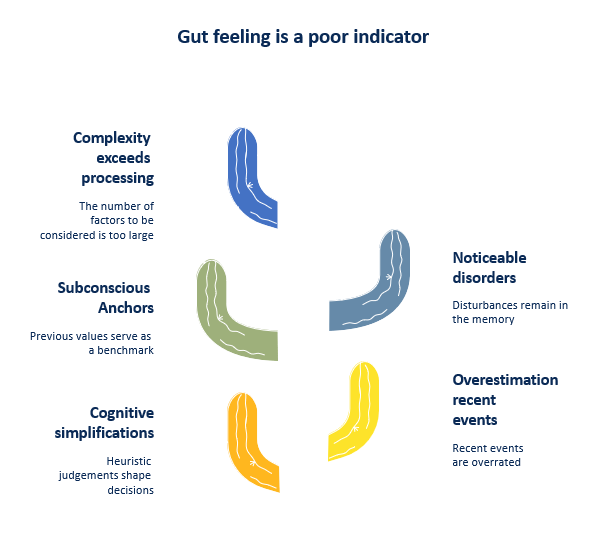

However, problems arise when human intervention systematically replaces analytical planning. Decisions are then often shaped by heuristic assessments: recent events are overvalued, past values serve as unconscious anchors, and particularly striking disruptions remain more firmly in the memory than statistically typical developments.

Such cognitive simplifications are often unproblematic in manageable systems. However, as complexity increases, they reach their limits. When thousands of items, volatile demand and international supply chains must all be taken into account simultaneously, the number of relevant factors quickly exceeds what experience-based assessments can reliably process.

Experience therefore remains important – but it loses its ability to steer a complex system on its own. In turbulent supply chains, gut instinct cannot replace analytical planning, but can at best complement it.

When weaknesses reinforce one another

In practice, the problems described rarely occur in isolation. Rather, they often occur simultaneously – and reinforce one another.

Fragmented data makes consistent planning difficult. To compensate for this uncertainty, planners often intervene manually or increase stock levels as a precaution. Safety stock levels that have built up over time, in turn, mask planning errors and delay feedback from the system. At the same time, planning algorithms operate using parameters that no longer match the current market situation.

This creates a self-reinforcing mechanism: unreliable data leads to cautious decisions, additional safety stock masks the actual causes of planning deviations, and manual interventions override systematic analyses. Each individual measure appears plausible in day-to-day operations – but taken together, they increase the complexity of the system.

The result is a planning system that operates in an increasingly reactive manner. Instead of being dampened, fluctuations in demand are often amplified along the supply chain. Small changes in demand can thus trigger disproportionate reactions in orders, production volumes or stock levels.
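This amplification can be reproduced with a deliberately simple model. The following sketch assumes a single planning stage that forecasts demand with a moving average and orders up to a target level covering the lead time plus one period. The function `simulate_orders`, the lead time of 2 periods, the window of 4 periods and the demand series are illustrative assumptions, not a description of any specific planning system.

```python
def simulate_orders(demand, lead_time=2, window=4):
    """Simulate orders of one stage using a moving-average forecast
    and an order-up-to policy (simplified, illustrative model)."""
    orders = []
    prev_target = None
    for t, d in enumerate(demand):
        # Moving-average forecast over the most recent periods.
        recent = demand[max(0, t - window + 1): t + 1]
        forecast = sum(recent) / len(recent)
        # Target stock covers forecast demand over lead time + 1 period.
        target = forecast * (lead_time + 1)
        if prev_target is None:
            order = d  # first period: simply replace what was consumed
        else:
            # Replace consumption and adjust stock to the new target.
            order = max(0.0, d + target - prev_target)
        orders.append(order)
        prev_target = target
    return orders


# Demand rises once from 100 to 110 units per period.
demand = [100] * 6 + [110] * 6
orders = simulate_orders(demand)

print("peak demand:", max(demand))  # peak demand: 110
print("peak order: ", max(orders))  # peak order:  117.5
```

A one-off demand increase of 10 units leads to orders that temporarily rise by 17.5 units, because the stage both replaces consumption and raises its target stock. If several such stages are chained, each one passes an already amplified signal upstream.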

The four patterns described (fragmented data, historically accumulated safety stock, neglected planning rules and heuristic interventions) are therefore not merely individual vulnerabilities. Together, they form a system that becomes increasingly difficult to manage as complexity grows.

Conclusion: A system from a bygone era

The four patterns described reveal a common underlying problem: many planning processes are still based on an understanding of planning that was developed for a significantly more stable environment. In manageable systems, experienced planners were long able to compensate for data gaps, imprecise parameters or incomplete models through their practical knowledge.

However, as complexity grows, this model reaches its limits. Increasing product variety, dynamic markets and globally interconnected supply chains increase the number of influencing factors to such an extent that experience-based management alone is no longer sufficient. The problem lies less with the people involved than with the structure of the systems they work with.

The crucial question for companies is therefore not whether their planners are committed or experienced enough. Rather, the decisive factor is whether the planning mechanisms in use are still suited to the current complexity of supply chains.

A subsequent article will examine which approaches can help make planning more scalable again in such environments.

FAQ – Frequently Asked Questions

Is manual planning fundamentally wrong?

No. Manual planning isn’t wrong; in fact, it was very effective for a long time.

The problem is that it was designed for less complex and more stable systems and no longer scales adequately under today’s conditions.

Why is Excel a problem in production planning?

The problem is not Excel as a tool in itself, but the way it is used:

- it creates parallel data silos

- it prevents real-time transparency

- it is prone to errors

- it hinders cross-departmental collaboration

In complex systems, this leads to systematic inconsistencies.

Why is there so much surplus stock?

Excess stock is rarely caused by a single wrong decision, but rather by:

- cumulative safety margins across different departments

- implicit buffers (batch sizes, deadlines, minimum quantities)

- a lack of transparency regarding the overall situation

The system ‘over-insures’ itself.

Is the problem down to the ERP systems?

No. ERP systems are generally capable of performing well.

The problem lies in the fact that:

- parameters are not updated regularly

- many parameters are set incorrectly or inappropriately

- the complexity of parameterisation is underestimated

→ The system calculates correctly – but on the wrong basis.

Why do planners intervene manually so often?

Because they:

- possess contextual knowledge that is lacking in the system

- find the system’s results implausible

- are responsible for ensuring delivery

The problem is not the intervention itself, but the systematic overriding of analytical results by heuristic judgements.

Will automation make planning redundant?

No. The role is changing.

In future, it will be less about:

- manual ordering decisions

and more about:

- monitoring systems

- interpreting results

- managing exceptions

Why do the problems reinforce each other?

Because they are interlinked:

- poor data → more manual intervention

- more intervention → more safety stock

- more stock → less transparency

- poor parameters → incorrect system recommendations

→ a self-reinforcing cycle

What is the main point of the article?

The challenges facing planning today do not lie in individual shortcomings, but in the interplay of a system that was designed for a different level of complexity.